Let’s define this with precision: Theory of Automata is not about robots. It is the mathematical study of abstract machines and the languages they recognize. It answers the most fundamental questions in computing: What are the ultimate limits of computation? What problems can we even hope to solve with an algorithm? This past paper is your rigorous proof of understanding the very bedrock upon which all programming languages, compilers, and digital logic are built.

Forget writing code for a moment. This is about understanding the essence of computation itself. It’s the science of formal languages and the machines—automata—that process them, from the simplest switch to the most powerful computer imaginable.

What This Paper Actually Computes: Your Understanding of Computational Hierarchy

1. The Foundation: Languages and Grammars

Everything begins with the formalization of a language—not English or Python, but a set of strings over an alphabet (e.g., {0,1}) defined by precise rules.

- Regular Languages: The simplest tier. Defined by Regular Expressions (e.g.,

(0+1)*01for strings ending in ’01’) and recognized by the simplest machines. - Context-Free Languages: More powerful. Defined by Context-Free Grammars (CFGs) with production rules (e.g.,

S -> 0S1 | εfor the language0ⁿ1ⁿ). These model the syntax of programming languages.

You’ll be asked to convert between these representations, prove a language belongs to a class, or design a grammar/regex from a description.

2. The Machine Hierarchy: A Ladder of Computational Power

The core of the subject is a beautiful, stratified hierarchy of abstract machines, each more powerful than the last.

A. Finite Automata (FA): The Memoryless Recognizers

- Deterministic Finite Automata (DFA): The simplest useful model. A finite state machine with a read head. You’ll be given a language and must design a DFA that accepts it, often minimizing it using a table-filling algorithm. You’ll prove why certain languages (e.g., strings with an equal number of 0’s and 1’s) are not regular using the Pumping Lemma for Regular Languages.

- Nondeterministic Finite Automata (NFA) & ε-NFA: Machines that can be in multiple states at once or move without reading input. You’ll prove their equivalence to DFAs through subset construction.

B. Pushdown Automata (PDA): Adding a Stack

A finite automaton with a single, unbounded stack for memory. This is the machine for Context-Free Languages. You’ll design PDAs for languages like palindromes (wwᴿ), which are beyond the capability of any FA. You’ll understand the limitations of deterministic PDAs vs. nondeterministic PDAs.

C. Turing Machines (TM): The Ultimate Model of Computation

The most powerful automaton in the classical hierarchy—a finite control, a tape, and a read/write head. This is the formal definition of an algorithm.

- Design: You’ll design a TM to compute a simple function (e.g., incrementing a binary number, checking for

aⁿbⁿcⁿ). - Variants: Understanding that all variants (multi-tape, nondeterministic) are equivalent in power to the basic one-tape deterministic TM (Church-Turing Thesis).

- The Halting Problem: The monumental, paradigm-shifting result. You will prove, via diagonalization or reduction, that the Halting Problem is undecidable—no algorithm (TM) can exist that decides whether an arbitrary program halts on a given input. This is the gateway to understanding the limits of computation.

3. The Great Chomsky Hierarchy: The Map of Computation

You’ll navigate the entire landscape:

- Regular Languages — Recognized by Finite Automata.

- Context-Free Languages — Recognized by Pushdown Automata.

- Context-Sensitive Languages — Recognized by Linear-Bounded Automata.

- Recursively Enumerable Languages — Recognized by Turing Machines.

You’ll place languages in this hierarchy and understand the containment (each class is a subset of the one above) and separation (there are languages in one class not in the lower one).

4. Reduction and Decidability: The Frontier of the Computable

This is where theory meets profound philosophical reality.

- Decidable vs. Recognizable Problems: A problem is decidable if a TM always halts with a yes/no answer. It is recognizable (semi-decidable) if a TM halts for ‘yes’ cases but may loop for ‘no’ cases (like the Halting Problem itself).

- Reductions: The primary tool for classifying hardness. You’ll use a mapping reduction to prove a problem is undecidable by reducing a known undecidable problem (like Halting) to it. “If I could solve Problem X, I could solve the Halting Problem. Since Halting is unsolvable, X must be unsolvable too.”

The Paper’s Ultimate Challenge: Proof and Construction

The hardest questions are not multiple-choice. They are:

- A Proof: *”Using the Pumping Lemma, prove that the language L = {0ⁿ1ⁿ0ⁿ | n ≥ 0} is not context-free.”*

- A Complex Construction: “Design a Turing Machine that accepts the language of properly nested parentheses. Then, argue whether the problem of determining if a CFG is ambiguous is decidable.”

This requires deep synthesis of machine design, hierarchy knowledge, and proof technique.

How to Master This Past Paper:

- Think in Abstractions. See a DFA not as code, but as a mathematical object (a 5-tuple:

(Q, Σ, δ, q0, F)). Master the formal definitions. - Practice Drawing. Become an expert at sketching clean, clear state diagrams for automata and parse trees for grammars. A good diagram is a complete argument.

- Internalize the Pumping Lemmas. They are not just theorems to cite; they are adversarial games. You must be able to play the role of the demon choosing the string to be pumped and the defender choosing the decomposition.

- Trace Executions. Practice manually tracing inputs through your automata and Turing machines step-by-step. This exposes design flaws.

- Embrace Reduction. See it as a superpower for classifying problems. Practice reducing known problems (like

A_TM, the acceptance problem) to new ones.

This past paper is your certificate in computational theory. It proves you understand not just how to make a computer do something, but what is fundamentally possible for any computer to do. Passing it means you have peered into the theoretical foundations of your entire field and grasped the elegant, sometimes unsettling, limits of the machine.

Theory of automata final paper

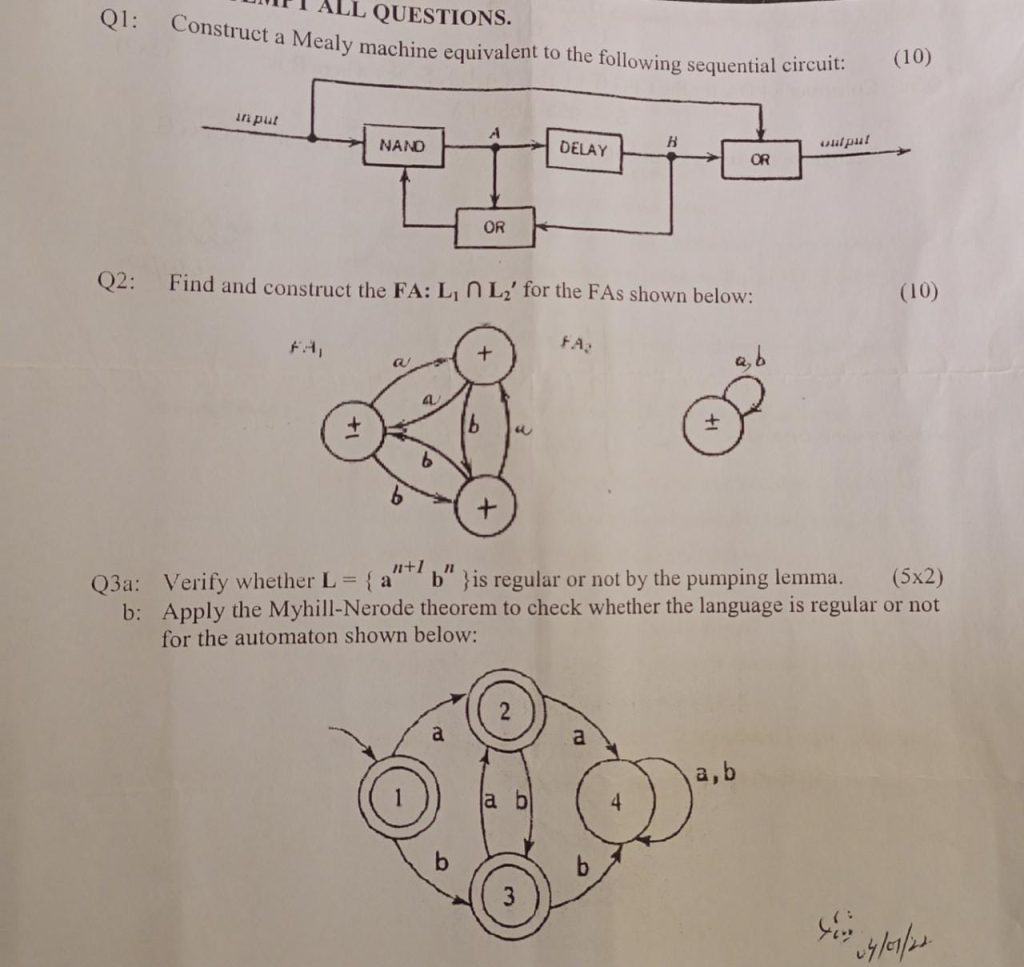

Q.1

Convert this following game scenario into NFA and then convert this NFA into a regular

expression.

The game of Flips is played with three coins. Initially they are all heads. A move consists of

flipping. Two coins simultaneously from whatever they were to the opposite side. For example,

flipping the end coins changes THH into HHT. We win when all three coins are tails. There are

eight possible states: HHH, HHT …. TTT. The only – is HHH; the only + is TTT. Draw this NFA,

the shortest word in this language is the shortest solution of this puzzle. What is it? Also tell how

many moves are in each winning sequence.

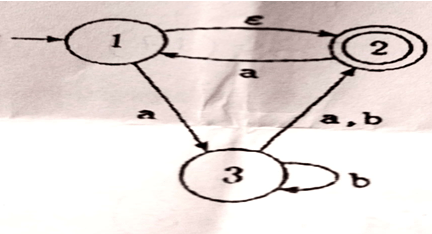

Q.2

Even though a CFG is not in regular form it still might generate a regular language. If so, this

means that there is another CFG that defines the same language and is in regular form. For the

following CFG’s find regular expressions that define the same language and describe the

language

a)

S—-→ aS| bX| a

X–→ aX| bY| a

Y–→ aY| a

b)

S–> aS| bX| a

X— aX| bY| bZ| a

Y-> aY| a

Theory of automata Mid term in 2021

Q1: a) Compute and mention all the words of length 6 and 7 for the Languages B*, Where

B = {zo oz}

- b) Also find if the string “zoozzoozzoozozozo” belongs to B*.

Q2: Build an FA that accepts Your Registration Number. Clearly mention all the symbols included in the sigma to describe the strings of its language.

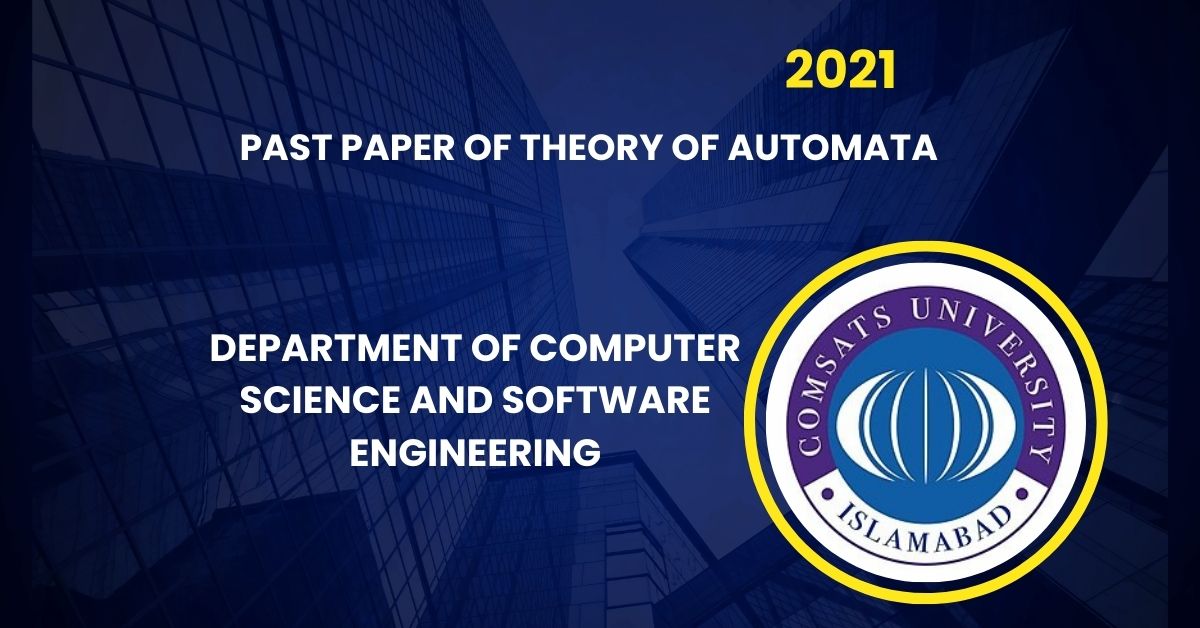

Q3: a) Construct (by Theorem) an equivalent DFA from the NFA given below:

b) Show that the same DFA will be constructed by applying the Algorithm to the NFA shown above.

Q4: Find the RE of the NFA given in Q3.

Q5: Apply Thompsons construction method to build an FA of the RE stated below:

a (ba) * b(^+a) b

Theory of automata Final Paper in 2022