Let’s get this straight right away: Software Testing is not about proving your code works. It’s about trying to prove it doesn’t—and doing so with ruthless creativity before your users do it for you. This past paper isn’t a dry checklist; it’s a simulation of the detective work, the strategic thinking, and the quality advocacy that separates a finished project from a professional product.

Forget the idea that testing is a boring, repetitive afterthought. This is where you switch from a builder’s mindset to a skeptic’s, a saboteur’s, and finally, an advocate’s mindset. It’s a disciplined hunt for the ghosts in the machine.

What This Paper Truly Tests: Your Quality Engineering Instincts

1. The Philosophy: Testing as Risk Management

The opening questions probe your understanding of why we test. You must articulate that testing is not about “finding all the bugs”—an impossible task—but about managing risk.

- Cost of Failure: What is the impact of a bug in a hospital infusion pump vs. a typo on a blog? Testing effort is proportional to consequence.

- Testing Objectives: You’ll distinguish between finding defects, providing confidence, preventing defects, and making business decisions (like release readiness).

2. The Foundational Taxonomy: Knowing Your Arsenal

You must flawlessly classify and select the right technique for the job.

- Static vs. Dynamic Testing: Reviewing code (static) without running it vs. executing the software (dynamic).

- Black-Box vs. White-Box vs. Grey-Box:

  - Black-Box (Specification-Based): You test based on requirements, ignoring code. Techniques: Equivalence Partitioning, Boundary Value Analysis (BVA), State Transition Testing, Use Case Testing. Expect a question: “For a field accepting 1–100, define test cases using BVA and EP.”

  - White-Box (Structure-Based): You test based on code structure. Techniques: Statement Coverage, Decision/Branch Coverage, Condition Coverage, Path Coverage. You’ll be given code and asked: “How many test cases are needed for 100% branch coverage?”

  - Grey-Box: The pragmatic blend, using both internal structure and external specifications.
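To make the two sample questions above concrete, here is a minimal sketch in Python. It assumes the 1–100 field accepts integers and uses the three-value flavour of BVA (some syllabi accept the two-value set 0, 1, 100, 101). The names `boundary_values`, `equivalence_partitions`, and `accepts` are hypothetical helpers invented for illustration, and `accepts` is only a stand-in for whatever code the exam would actually give you.

```python
def boundary_values(low, high):
    """Three-value BVA for an inclusive integer range [low, high]."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


def equivalence_partitions(low, high):
    """One representative per partition: below, inside, and above the range."""
    return {
        "invalid_below": low - 10,
        "valid": (low + high) // 2,
        "invalid_above": high + 10,
    }


def accepts(value, low=1, high=100, trace=None):
    """Hypothetical validator; `trace` records which branch outcomes ran."""
    trace = set() if trace is None else trace
    if value < low:
        trace.add("below:true")
        return False
    trace.add("below:false")
    if value > high:
        trace.add("above:true")
        return False
    trace.add("above:false")
    return True


if __name__ == "__main__":
    # Black-box: inputs come from the specification alone.
    for v in boundary_values(1, 100):
        print(v, "accepted" if accepts(v) else "rejected")
    print(equivalence_partitions(1, 100))

    # White-box: two decisions give four branch outcomes to cover.
    covered = set()
    for v in (0, 101, 50):
        accepts(v, trace=covered)
    print(len(covered), "of 4 branch outcomes covered by 3 test cases")
```

For this particular validator, three inputs are enough for 100% branch coverage, because the input that fails the first check never reaches the second. The count depends entirely on the shape of the code in the question, so always trace the decisions rather than guess.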

3. The Levels of Testing: A Staged Defense

Software is tested in layers, like a series of sieves with finer and finer mesh.

- Unit Testing: Testing individual functions/modules in isolation. You’ll discuss mocks, stubs, and drivers.

- Integration Testing: Testing interfaces between components. You’ll contrast Big Bang, Top-Down, Bottom-Up, and Sandwich approaches and their trade-offs.

- System Testing: Testing the complete, integrated system against requirements. This includes functional and, crucially, non-functional testing: Performance, Load, Stress, Usability, Security.

- Acceptance Testing: The final gate. Alpha, Beta, UAT (User Acceptance Testing)—does it meet user needs and business goals?
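The unit-testing vocabulary (stub, mock, driver) is easier to remember with a small example. The sketch below uses Python's standard `unittest.mock.Mock`; the function `order_total` and the `tax_service` collaborator are hypothetical, invented only to show isolation at the unit level.

```python
from unittest.mock import Mock


def order_total(cart, tax_service):
    """Unit under test: sums item prices and adds tax from a collaborator."""
    subtotal = sum(cart)
    return subtotal + tax_service.tax_for(subtotal)


def test_order_total_adds_tax():
    # Stub behaviour: a canned answer replaces the real tax service.
    tax_service = Mock()
    tax_service.tax_for.return_value = 5

    assert order_total([20, 30], tax_service) == 55

    # Mock behaviour: verify the interaction, not just the result.
    tax_service.tax_for.assert_called_once_with(50)


if __name__ == "__main__":
    # The test function acts as the driver: it calls the unit and checks it.
    test_order_total_adds_tax()
    print("unit test passed")
```

The same object plays both roles here: it is a stub when it supplies a fixed return value and a mock when the test asserts how it was called. An integration test would swap the fake for the real tax service and exercise the interface between the two components.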

4. The Critical Skill: Test Case Design & Documentation

This is the core of the exam. You won’t just name techniques; you’ll apply them.

- Writing Test Cases: Given a requirement, you’ll write clear, reproducible test cases with Test ID, Preconditions, Steps, Test Data, Expected Result, Actual Result, and Pass/Fail status.

- Traceability Matrix: Demonstrating that each requirement is covered by test cases. You might be asked to create a fragment of one.
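One way to picture both artifacts is as plain data. The sketch below is an illustration under assumptions, not a prescribed template: the `CaseRecord` fields mirror the list above, while the requirement IDs and the `traceability` and `uncovered` helpers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CaseRecord:
    """A single documented test case, linked to the requirement it covers."""
    test_id: str
    requirement_id: str
    preconditions: str
    steps: list
    test_data: str
    expected: str
    actual: str = ""
    status: str = "Not Run"


def traceability(requirements, test_cases):
    """Map every requirement ID to the IDs of the test cases that cover it."""
    matrix = {req: [] for req in requirements}
    for tc in test_cases:
        # Cases that point at an unlisted requirement still get a row.
        matrix.setdefault(tc.requirement_id, []).append(tc.test_id)
    return matrix


def uncovered(matrix):
    """Requirements with no test case: the gaps a matrix exists to expose."""
    return [req for req, ids in matrix.items() if not ids]


if __name__ == "__main__":
    cases = [
        CaseRecord("TC-001", "REQ-1", "User is registered",
                   ["Open login page", "Enter credentials", "Submit"],
                   "valid user / valid password", "Dashboard is shown"),
        CaseRecord("TC-002", "REQ-1", "User is registered",
                   ["Open login page", "Enter credentials", "Submit"],
                   "valid user / wrong password", "Error message is shown"),
    ]
    matrix = traceability(["REQ-1", "REQ-2"], cases)
    print(matrix)
    print("Uncovered:", uncovered(matrix))
```

In an exam answer the matrix is normally drawn as a table with requirements as rows and test case IDs as columns. The point is the same: an empty row is a requirement nobody is testing.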

5. The Modern Context: Automation & Lifecycle

- Test Automation: When to automate (stable features, repetitive execution) and when not to (frequently changing UI, exploratory testing). You might discuss the role of tools like Selenium, JUnit, or Cypress.

- Testing in Agile/DevOps: The shift from a separate “testing phase” to Continuous Testing. Concepts like Test-Driven Development (TDD) and testing within CI/CD pipelines.

- Metrics & Reporting: Interpreting metrics like Defect Density, Test Case Effectiveness, Escape Defect Rate. A question might give you data and ask: “What does this trend tell you about the quality of the last sprint?”
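Metric definitions vary between textbooks, so check the one your course uses. As a rough sketch, the code below assumes defect density means defects per thousand lines of code (KLOC) and escape rate means the share of all known defects that were found after release. The sprint figures are made up purely for illustration.

```python
def defect_density(defects, kloc):
    """Defects per thousand lines of code (one common definition)."""
    return defects / kloc


def escape_rate(escaped, found_in_test):
    """Share of all known defects that slipped past testing into production."""
    total = escaped + found_in_test
    return escaped / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical data: (sprint, defects found in test, KLOC changed, escaped)
    sprints = [("S1", 12, 4.0, 1), ("S2", 15, 5.0, 3), ("S3", 9, 3.0, 6)]
    for name, found, kloc, escaped in sprints:
        print(name,
              "density:", round(defect_density(found, kloc), 2),
              "escape rate:", round(escape_rate(escaped, found), 2))
```

In this invented data set the density stays flat while the escape rate climbs. That is the kind of trend an exam question wants you to read: the code is no buggier than before, but testing is catching a smaller share of the defects.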

The Paper’s Real Challenge: Strategic Test Planning

The hardest questions present a scenario: “You are the test lead for a new e-commerce payment module. Outline your test strategy, including key risk areas, techniques you will employ at each level, and how you will assess test completion.”

This requires you to synthesize everything: risk analysis, technique selection, resource planning, and exit criteria.

How to Master This Past Paper:

- Think Like an Adversary. For any given feature, ask: “What’s the least obvious way to break this?” Consider edge cases, invalid inputs, and unexpected user behavior.

- Practice the “Technique -> Application” Link. Don’t just memorize “Boundary Value Analysis.” Practice applying it to several different kinds of input: numbers, dates, string lengths, dropdown lists.

- Master the Vocabulary. Know the precise difference between Verification & Validation, Error vs. Fault vs. Failure, Retesting vs. Regression Testing.

- Draw Diagrams for State-Based Testing. For workflows (e.g., login, checkout), draw the state transition diagram first. It makes deriving test cases systematic.

- Justify Your Choices. In essay questions, always explain why you chose a technique. “I will use Equivalence Partitioning here to reduce the thousands of possible input values into a manageable set of representative classes.”
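The diagram-first tip for state-based testing can be sketched in code as well. The example below assumes a simplified, hypothetical login workflow with three states. The state and event names are invented, and the table stands in for the diagram you would draw on paper.

```python
# Hypothetical login workflow: (current state, event) -> next state.
TRANSITIONS = {
    ("logged_out", "valid_login"): "logged_in",
    ("logged_out", "invalid_login"): "logged_out",
    ("logged_out", "third_failure"): "locked",
    ("logged_in", "logout"): "logged_out",
    ("locked", "admin_unlock"): "logged_out",
}


def next_state(state, event):
    """Return the next state, or None when the event is invalid in this state."""
    return TRANSITIONS.get((state, event))


def transition_test_cases():
    """One test case per valid transition (all-transitions coverage)."""
    return [(s, e, t) for (s, e), t in TRANSITIONS.items()]


def invalid_pairs():
    """State/event pairs with no arrow in the diagram: negative test ideas."""
    states = {s for s, _ in TRANSITIONS} | set(TRANSITIONS.values())
    events = {e for _, e in TRANSITIONS}
    return sorted((s, e) for s in states for e in events
                  if (s, e) not in TRANSITIONS)


if __name__ == "__main__":
    for start, event, expected in transition_test_cases():
        assert next_state(start, event) == expected
        print(f"{start} --{event}--> {expected}")
    print(len(invalid_pairs()), "invalid state/event pairs to probe")
```

Each arrow in the diagram becomes one positive test case, and each missing arrow is a candidate negative test. Listing them this way is what makes the derivation systematic rather than a matter of inspiration.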

This past paper is your certification in quality assurance. It proves you have the methodical rigor to hunt for flaws and the strategic mind to ensure a product is not just delivered, but delivered with integrity. Passing it means you are the last line of defense—and the first advocate for the user.

Software Testing Mid-Term Examination 2021

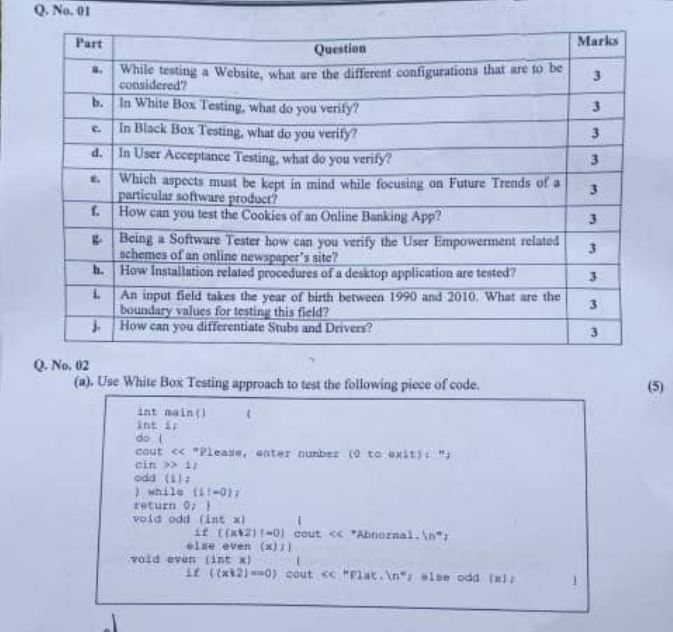

Q1:

- An input field takes the year of birth between 1970 and 2010. What are the boundary values for testing this field?

- Differentiate White Box and Black Box Testing, with at least 3 examples of each.

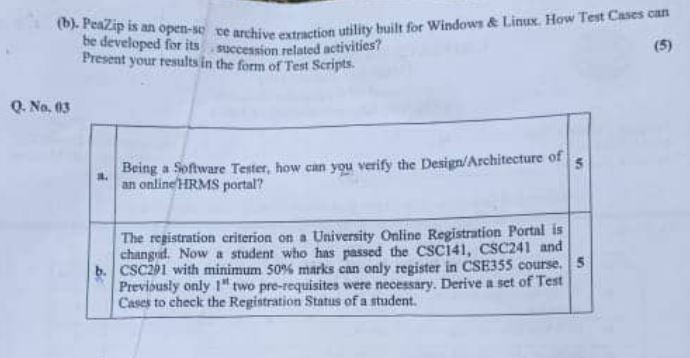

Q2:

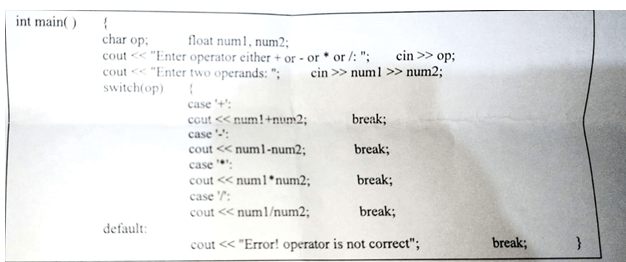

- Use the White Box Testing approach to test the following piece of code.

- What are the different activities carried out in the STLC (Software Testing Life Cycle) process?